# The New Intent-Source License: How AI Agents Contribute to Software with Agency

A spatial statistics researcher in Oslo needs Arcflow to support a new graph traversal pattern for her mobility study. Her coding agent describes what she needs, sends it to OZ's build relay, and gets back a signed binary with the feature in twelve minutes. She didn't configure a compiler. She didn't read a build system. She didn't leave her Jupyter notebook. That's the Intent-Source License: a new model where AI agents handle the engineering so researchers can stay in their problem space.

## How agents meet for the first time

Think of it like a contractor showing up to a new client's office.

A human consultant walks in, hands over a business card, and says: "Here's what I do, here's what I've worked on, here's how to reach me." The client looks at the card, decides if there's a fit, and either starts a conversation or says thanks and moves on.

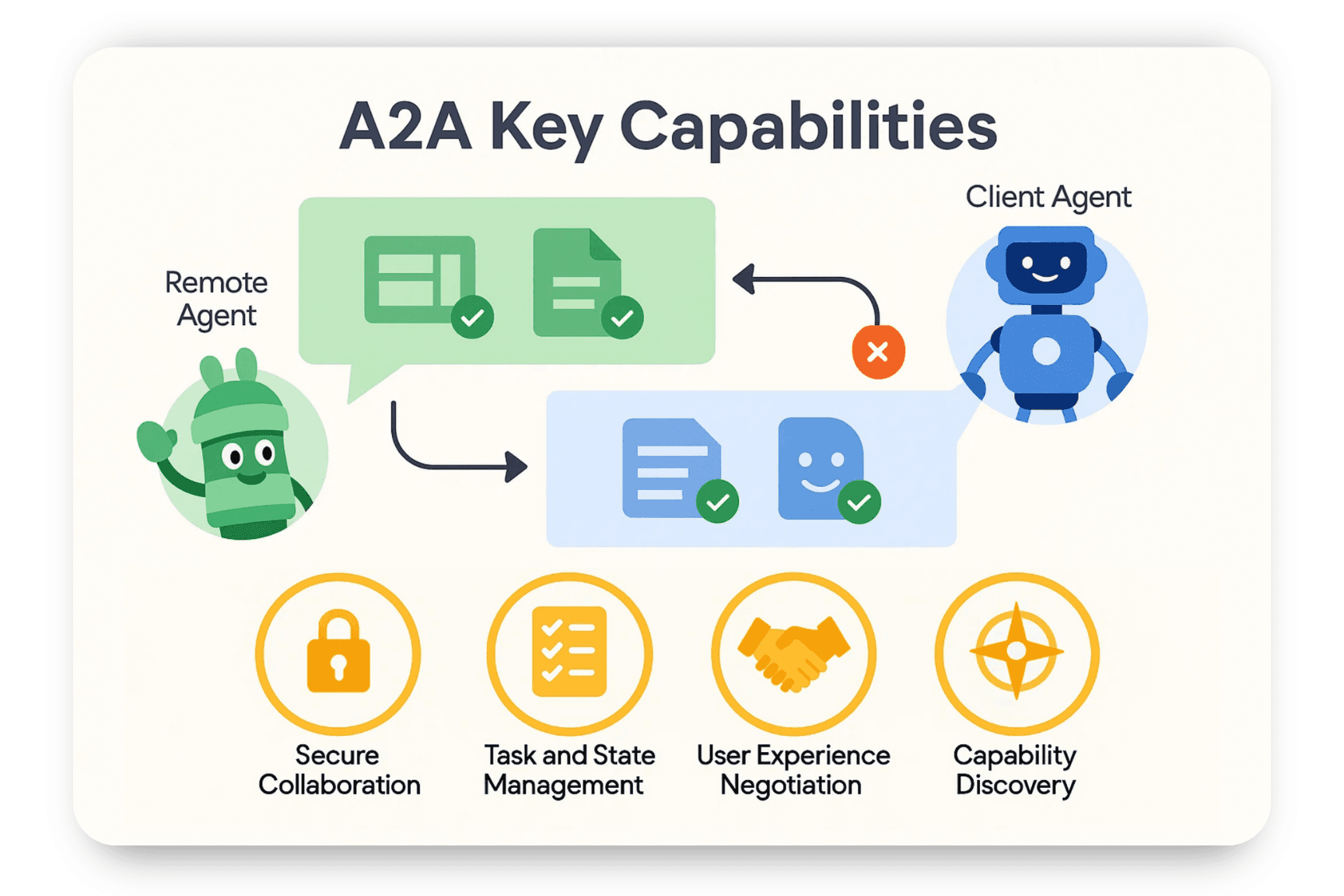

Agents do the same thing, just faster. It starts with a handshake called capability discovery. The developer's agent sends a request to OZ's relay server and gets back an AgentCard: a structured document that says "I'm the Arcflow Build Relay. I accept intent submissions. I return signed binaries. Here's my authentication method. Here are my rate limits."

```json
{
  "name": "Arcflow Build Relay",
  "description": "Implements intents against the Arcflow codebase",
  "url": "https://relay.arcflow.dev",
  "capabilities": {
    "streaming": true,
    "pushNotifications": false
  },
  "authentication": {
    "schemes": ["Bearer"]
  },
  "skills": [
    { "id": "implement-intent", "name": "Implement Feature Intent" },
    { "id": "fix-intent", "name": "Implement Bug Fix Intent" }
  ]
}
```

No negotiation. No back-and-forth. The card tells the developer's agent everything it needs to know: what the relay can do, how to authenticate, what formats it accepts. If the agent's request fits the relay's capabilities, it moves straight to task initiation.
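Before submitting anything, the developer's agent has to decide whether the card is a fit. Here is a minimal TypeScript sketch of that check. It assumes the card has already been fetched and parsed. The `Requirements` shape and the `checkFit` helper are hypothetical. The types model only the fields in the example card above; real A2A cards carry more, and field names vary between A2A spec versions.

```typescript
// Types cover only the fields in the example card; real A2A AgentCards
// carry more, and field names vary between spec versions.
interface AgentSkill {
  id: string;
  name: string;
}

interface AgentCard {
  name: string;
  description: string;
  url: string;
  capabilities: { streaming?: boolean; pushNotifications?: boolean };
  authentication: { schemes: string[] };
  skills: AgentSkill[];
}

// Hypothetical: what the developer's agent needs from a relay.
interface Requirements {
  skillId: string;
  authScheme: string;
  needsStreaming: boolean;
}

// Returns the reasons the card does not fit; an empty array means "proceed".
function checkFit(card: AgentCard, req: Requirements): string[] {
  const problems: string[] = [];
  if (!card.skills.some((skill) => skill.id === req.skillId)) {
    problems.push(`missing skill: ${req.skillId}`);
  }
  if (!card.authentication.schemes.includes(req.authScheme)) {
    problems.push(`unsupported auth scheme: ${req.authScheme}`);
  }
  if (req.needsStreaming && card.capabilities.streaming !== true) {
    problems.push("streaming not supported");
  }
  return problems;
}

// The card from the example above, as the relay would return it.
const card: AgentCard = JSON.parse(`{
  "name": "Arcflow Build Relay",
  "description": "Implements intents against the Arcflow codebase",
  "url": "https://relay.arcflow.dev",
  "capabilities": { "streaming": true, "pushNotifications": false },
  "authentication": { "schemes": ["Bearer"] },
  "skills": [
    { "id": "implement-intent", "name": "Implement Feature Intent" },
    { "id": "fix-intent", "name": "Implement Bug Fix Intent" }
  ]
}`);

console.log(checkFit(card, { skillId: "implement-intent", authScheme: "Bearer", needsStreaming: true }));
```

Returning a list of problems, rather than a bare boolean, gives the agent something concrete to report when it decides not to proceed.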

This is where the consultant analogy gets interesting. A human consultant would spend a meeting scoping the work, writing a statement of work, negotiating terms. The agent compresses all of that into a single structured message: "Here's what I need changed, here's why, here are test cases that prove it works, here's the version I'm running." One request. No meeting.

## The task lifecycle

Once the relay accepts the intent, a task starts. It moves through five states, and the developer's agent can watch every transition in real time:

- Submitted means the relay received the intent and validated its schema.
- Working means a sandboxed Claude Code session is actively implementing the change against Arcflow's source.
- Artifact-ready means the code compiles, tests pass, and a signed binary is waiting.
- Completed means the developer's agent has received and verified the artifact.
- Failed means something broke, and the relay sends back a structured explanation of what went wrong.
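The lifecycle above can be modeled as a small state machine that the watching agent uses to sanity-check the updates it receives. This is a sketch under two assumptions. First, the state strings are lowercase forms of the names above; A2A's own task states include submitted, working, completed, and failed, but "artifact-ready" is this post's term. Second, the rule that any non-terminal state can fall to Failed is inferred, not specified.

```typescript
// States from the lifecycle above. "artifact-ready" is OIP-level, not an A2A state.
type TaskState = "submitted" | "working" | "artifact-ready" | "completed" | "failed";

// Allowed transitions. Assumption: any non-terminal state may fail;
// "completed" and "failed" are terminal.
const TRANSITIONS: Record<TaskState, readonly TaskState[]> = {
  submitted: ["working", "failed"],
  working: ["artifact-ready", "failed"],
  "artifact-ready": ["completed", "failed"],
  completed: [],
  failed: [],
};

function canTransition(from: TaskState, to: TaskState): boolean {
  return TRANSITIONS[from].includes(to);
}

function isTerminal(state: TaskState): boolean {
  return TRANSITIONS[state].length === 0;
}

// Replays the states observed after submission and rejects illegal jumps,
// e.g. a stream that claims "completed" without ever being "artifact-ready".
function replay(observed: TaskState[]): TaskState {
  let current: TaskState = "submitted";
  for (const next of observed) {
    if (!canTransition(current, next)) {
      throw new Error(`illegal transition: ${current} -> ${next}`);
    }
    current = next;
  }
  return current;
}

console.log(replay(["working", "artifact-ready", "completed"]));
```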

The developer's agent doesn't sit idle during this. The relay streams progress updates over SSE (Server-Sent Events), the same technology that powers ChatGPT's streaming responses. The agent sees: "Modifying query compiler... Running test suite... 847 tests pass, 0 fail... Compiling release binary..." It's like watching a build log, except the build is happening on someone else's machine against code you've never seen.
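On the receiving side, SSE is a plain-text format. `data:` lines accumulate into an event, a blank line dispatches it, and lines starting with a colon are comments, often used as keep-alives. Below is a minimal parser for a fully buffered chunk. It ignores the `id` and `retry` fields. The JSON payload shape in the example stream is hypothetical; in A2A, each `data:` payload is a JSON-RPC response carrying a task status or artifact update.

```typescript
interface SseEvent {
  event: string; // "message" unless an `event:` field set it
  data: string;
}

// Parses buffered SSE text into events, following the field rules of the
// WHATWG event-stream format for `data`, `event`, and comment lines.
function parseSse(text: string): SseEvent[] {
  const events: SseEvent[] = [];
  let eventType = "";
  let dataLines: string[] = [];
  for (const line of text.split(/\r\n|\r|\n/)) {
    if (line === "") {
      // A blank line dispatches the buffered event, if it has any data.
      if (dataLines.length > 0) {
        events.push({ event: eventType || "message", data: dataLines.join("\n") });
      }
      eventType = "";
      dataLines = [];
      continue;
    }
    if (line.startsWith(":")) continue; // comment or keep-alive
    const colon = line.indexOf(":");
    const field = colon === -1 ? line : line.slice(0, colon);
    let value = colon === -1 ? "" : line.slice(colon + 1);
    if (value.startsWith(" ")) value = value.slice(1); // one optional space
    if (field === "data") dataLines.push(value);
    else if (field === "event") eventType = value;
  }
  return events; // an unterminated trailing event is dropped, as in the spec
}

// Hypothetical progress payloads, in the spirit of the updates described above.
const stream =
  'data: {"state":"working","message":"Modifying query compiler..."}\n\n' +
  ": keep-alive\n\n" +
  'data: {"state":"working","message":"847 tests pass, 0 fail"}\n\n';

for (const e of parseSse(stream)) {
  console.log(JSON.parse(e.data).message);
}
```

A live client would feed this incrementally from the response body and keep any partial trailing event in a buffer until its blank line arrives.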

The streaming updates never include source code, file paths, or internal architecture details. The developer's agent sees behavioral progress ("implementing OPTIONAL MATCH support"), not implementation details ("modifying wc-query-compiler/src/lib.rs line 847").
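One naive way to enforce that boundary is a last-pass filter over every outgoing update that masks anything shaped like a source path or line reference. The sketch below is hypothetical and does not describe how OZ's relay is built. A regex filter is only a backstop, and the patterns (the file extensions, the "line N" form) are assumptions chosen to match the example above.

```typescript
// Hypothetical leak patterns: path-like tokens ending in a source or build
// file extension, and "line N" references.
const SOURCE_PATH = /\b[\w.-]+(?:\/[\w.-]+)*\.(?:rs|toml|cu|c|h)\b/g;
const LINE_REF = /\bline \d+\b/gi;

// Masks implementation details before a progress update leaves the relay.
function redactProgress(message: string): string {
  return message
    .replace(SOURCE_PATH, "[internal]")
    .replace(LINE_REF, "")
    .replace(/\s{2,}/g, " ")
    .trim();
}

console.log(redactProgress("modifying wc-query-compiler/src/lib.rs line 847"));
console.log(redactProgress("implementing OPTIONAL MATCH support"));
```

Behavioral messages pass through untouched, so the filter only matters when a leak slips into a message that should have been phrased behaviorally in the first place.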

When the build finishes, the relay sends back an artifact: the compiled binary, signed with OZ's Ed25519 key, alongside test results and a behavioral summary. The developer's agent verifies the signature, loads the new binary, and continues working. The whole exchange, from handshake to working binary, took less time than it takes to write a Jira ticket.
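On the developer's side, verification is a single call once the agent holds OZ's public key. Here is a minimal sketch using Node's built-in `crypto` module. It assumes a detached Ed25519 signature over the raw binary bytes; the post does not specify the exact signing format, such as whether a manifest or digest is signed instead. The key pair is generated locally only so the example runs. A real agent would pin OZ's published public key, for instance loaded with `createPublicKey` from a PEM string.

```typescript
import { generateKeyPairSync, sign, verify, KeyObject } from "node:crypto";

// Checks a detached Ed25519 signature over the artifact bytes.
// Node requires the algorithm argument to be null for Ed25519.
function verifyArtifact(binary: Buffer, signature: Buffer, publicKey: KeyObject): boolean {
  return verify(null, binary, publicKey, signature);
}

// Demo only: OZ would hold the private key; the agent only needs the public key.
const { publicKey, privateKey } = generateKeyPairSync("ed25519");
const binary = Buffer.from("stand-in bytes for a compiled .so");
const signature = sign(null, binary, privateKey);

// Refuse to load anything whose signature does not check out.
if (!verifyArtifact(binary, signature, publicKey)) {
  throw new Error("signature check failed; not loading artifact");
}

// Any modification to the bytes invalidates the signature.
const tampered = Buffer.from(binary);
tampered[0] ^= 0xff;
console.log(verifyArtifact(tampered, signature, publicKey));
```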

## The people we built this for

A PhD candidate modeling pedestrian flow dynamics at a train station doesn't want to learn Rust's borrow checker. A computational geometry researcher testing new spatial predicates doesn't want to set up a 14-crate Cargo workspace. A geospatial analyst running hotspot detection on urban crime data doesn't want to debug CUDA driver compatibility.

These people work at the intersection of mathematics and spatial intelligence. Spatial statistics, computational geometry, spatial cognition, geospatial analytics. Many of them are building Physical AI systems where software has to reason about real objects in real space, and the graph database is the substrate for that reasoning. Their time should go toward solving those problems, not toward the infrastructure underneath.

Arcflow is a graph database with spatial predicates, temporal queries, graph algorithms, and GPU acceleration. When a researcher's work bumps into a gap, the traditional path is: file an issue, wait for the roadmap, work around it. Or worse: fork the project, learn the build system, fix it yourself, maintain the fork forever.

Intent-Source replaces that entire path. Describe the gap. Let an agent handle the implementation. Get back a binary that works. Stay in your research.

## Intent-Source: how it works

The OZ Intent-Source License (OISL) has three parts:

Compiled artifacts are free to use. You get .dylib, .so, and .wasm binaries. Embed them in your product, ship to production, sell to customers. No restrictions on commercial use.

Contributions happen through intents. When a developer's AI agent hits a limitation in Arcflow, it describes what should change: "Add OPTIONAL MATCH support that returns null for missing paths." That description, along with test cases and context, gets sent to a server-side AI agent. The server agent implements the change, runs the test suite, compiles a binary, and sends it back. The developer gets a working artifact in minutes.

The community shapes the engine. Every submitted intent is a signal about what researchers and developers actually need. The best contributions get promoted into the main release. The person who submitted the intent gets attribution in the changelog and early access to the build.

On the developer's machine, their coding agent identifies a gap. Maybe a WorldCypher query fails. Maybe a traversal pattern isn't supported. The agent formulates a structured intent:

```json
{
  "description": "Add OPTIONAL MATCH support to WorldCypher",
  "motivation": "Need to query optional relationships that may not exist",
  "category": "feature",
  "test_cases": [{
    "query": "OPTIONAL MATCH (a:Person)-[:KNOWS]->(b) RETURN a.name, b.name",
    "expected_behavior": "Returns b.name as null when no KNOWS edge exists"
  }],
  "context": {
    "arcflow_version": "1.7.0"
  }
}
```

This gets sent as an A2A Task (Google's Agent2Agent protocol) over HTTPS to the OZ Build Relay. The relay spawns an isolated sandbox, starts a server-side Claude Code session, and gives it one job: implement this intent, make the tests pass.
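Wrapping the intent for transport is mostly JSON-RPC 2.0 boilerplate. This sketch assumes a recent A2A spec version, where the method is `message/send` (with `message/stream` as the streaming variant) and message parts are tagged with `kind`; earlier drafts used `tasks/send` and a `type` field. Carrying the OIP media type in part metadata is also an assumption.

```typescript
interface TestCase {
  query: string;
  expected_behavior: string;
}

// Mirrors the intent JSON shown above.
interface Intent {
  description: string;
  motivation: string;
  category: string;
  test_cases: TestCase[];
  context: { arcflow_version: string };
}

interface DataPart {
  kind: "data";
  data: Intent;
  metadata: { mimeType: string };
}

interface SendMessageRequest {
  jsonrpc: "2.0";
  id: number;
  method: "message/send";
  params: {
    message: { kind: "message"; role: "user"; messageId: string; parts: DataPart[] };
  };
}

// Builds the JSON-RPC request body the developer's agent would POST to the relay.
function buildIntentRequest(intent: Intent, id: number, messageId: string): SendMessageRequest {
  return {
    jsonrpc: "2.0",
    id,
    method: "message/send",
    params: {
      message: {
        kind: "message",
        role: "user",
        messageId,
        parts: [{ kind: "data", data: intent, metadata: { mimeType: "application/vnd.oip.intent+json" } }],
      },
    },
  };
}

const intent: Intent = {
  description: "Add OPTIONAL MATCH support to WorldCypher",
  motivation: "Need to query optional relationships that may not exist",
  category: "feature",
  test_cases: [
    {
      query: "OPTIONAL MATCH (a:Person)-[:KNOWS]->(b) RETURN a.name, b.name",
      expected_behavior: "Returns b.name as null when no KNOWS edge exists",
    },
  ],
  context: { arcflow_version: "1.7.0" },
};

// The messageId would normally be a fresh UUID; a fixed string keeps this reproducible.
const body = JSON.stringify(buildIntentRequest(intent, 1, "msg-0001"));
console.log(body);
```

The resulting body would be POSTed over HTTPS to the `url` from the relay's AgentCard, with an `Authorization: Bearer <API key>` header per the card's declared scheme.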

The server agent works against the full codebase. It modifies Rust files, runs cargo test, iterates until the build passes. Then it compiles a release binary, signs it with OZ's Ed25519 key, and sends back:

- The compiled artifact (a signed .dylib or .so)
- Test results (pass/fail counts for the developer's submitted tests)
- A behavioral summary ("OPTIONAL MATCH now returns null rows for missing paths")
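When the relay answers, those three items could arrive as parts of an A2A Artifact: a file part with base64-encoded bytes, a data part, and a text part. The sketch below unpacks them. The `passed`/`failed` field names in the data part, the `libarcflow.so` file name, and the `unpackArtifact` helper are all hypothetical. Part field names follow recent A2A drafts.

```typescript
// A2A-style parts, limited to the three kinds this response uses.
type Part =
  | { kind: "text"; text: string }
  | { kind: "file"; file: { name?: string; mimeType?: string; bytes: string } }
  | { kind: "data"; data: Record<string, unknown> };

interface Artifact {
  artifactId: string;
  parts: Part[];
}

interface BuildResult {
  fileName: string;
  binary: Buffer;
  passed: number;
  failed: number;
  summary: string;
}

// Pulls the binary, test counts, and behavioral summary out of an artifact.
function unpackArtifact(artifact: Artifact): BuildResult {
  let fileName = "";
  let binary: Buffer | undefined;
  let passed = 0;
  let failed = 0;
  let summary = "";
  for (const part of artifact.parts) {
    switch (part.kind) {
      case "file":
        fileName = part.file.name ?? "artifact.bin";
        binary = Buffer.from(part.file.bytes, "base64");
        break;
      case "data":
        // Hypothetical field names for the submitted-test counts.
        passed = Number(part.data["passed"] ?? 0);
        failed = Number(part.data["failed"] ?? 0);
        break;
      case "text":
        summary = part.text;
        break;
    }
  }
  if (binary === undefined) throw new Error("artifact contains no binary");
  return { fileName, binary, passed, failed, summary };
}

// Hypothetical response; the file name is illustrative.
const result = unpackArtifact({
  artifactId: "example-artifact",
  parts: [
    { kind: "file", file: { name: "libarcflow.so", bytes: Buffer.from("stand-in bytes").toString("base64") } },
    { kind: "data", data: { passed: 1, failed: 0 } },
    { kind: "text", text: "OPTIONAL MATCH now returns null rows for missing paths" },
  ],
});

// Only hand the binary to the loader if every submitted test passed.
console.log(result.failed === 0 ? `ready: ${result.fileName}` : "tests failed");
```

In practice the signature check on the binary would also run before anything is loaded.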

## Managing cognitive load, not code

Here's what a researcher doesn't have to do:

- Clone a hundred-thousand-line Rust repository

- Install a Rust toolchain, CUDA toolkit, and platform-specific build dependencies

- Understand 14 interconnected crates and their dependency graph

- Run a CI/CD pipeline with 1,095 tests

- Debug linker errors on three operating systems

- Maintain a fork when the upstream moves

They describe the behavior they need. Their agent talks to our agent. They get back a binary. The cognitive load stays where it belongs: on the research problem.

This isn't just about convenience. It's about who gets to participate. A professor at a university in Boston and a machine learning engineer at a startup in Bangalore and a GIS analyst at a city planning department in Lagos all have the same access to the relay. The barrier to contributing isn't "can you build Rust from source." It's "can you describe what you need."

## Why A2A, not a custom protocol

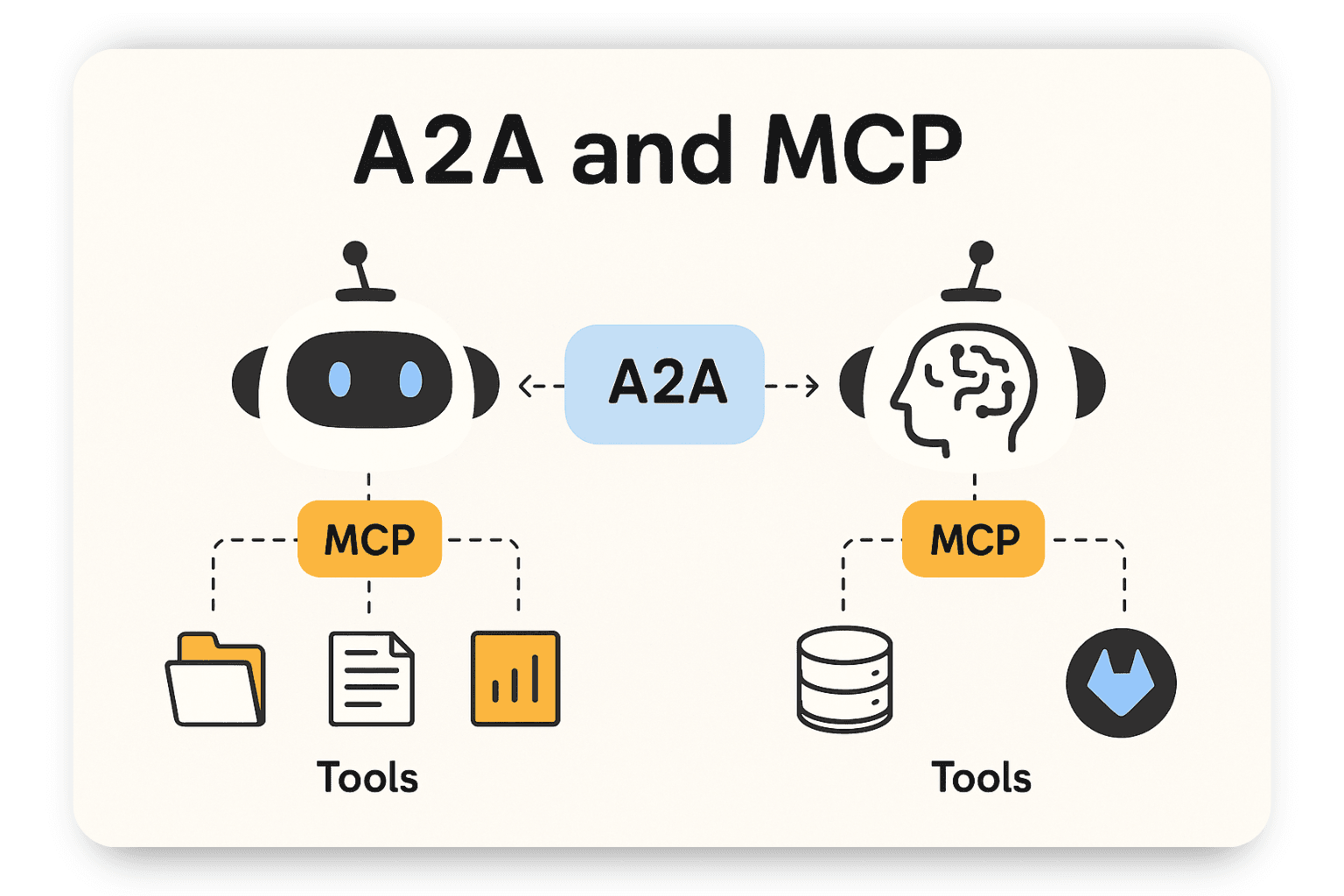

Google's A2A protocol gives us everything we need for the transport layer: AgentCard for capability discovery, Task for lifecycle management, Message for structured communication, Artifact for binary delivery. It's JSON-RPC 2.0 over HTTPS with SSE for streaming. Boring infrastructure, and that's the point.

The server-side agent uses MCP (Anthropic's Model Context Protocol) to interact with build tools: compiler, test runner, artifact signer. MCP connects the agent to tools. A2A connects agents to each other.

We call the application layer OIP (Open Intent Protocol). It's three things on top of A2A:

- An intent schema for structured change requests (application/vnd.oip.intent+json)
- A source-privacy boundary that filters responses to strip internal details
- An artifact signing format for verifiable binary delivery

Any project could implement an OIP-compatible relay. The protocol isn't specific to Arcflow.

## The contribution loop

Here's what makes this different from filing a feature request.

A developer's coding agent hits a wall. It formulates an intent. The relay's agent implements it. The developer's agent loads the new binary, continues working, and might hit the next wall ten minutes later. No human touched anything. No ticket sat in a backlog. The turnaround is minutes, not sprints.

If the change works well, it enters a promotion queue. OZ reviews the diff, and if it passes, every developer gets the improvement in the next release. The developer who submitted the intent gets attribution in the changelog and early access to the build.

This creates a contribution loop that runs at machine speed. Agents finding gaps, agents filling gaps, agents validating fixes, humans reviewing the good stuff for promotion. The feedback loop that open source created for human developers, now running between AI agents.

The OIP intent schema is an open format. If you're building software and want your users to contribute through their AI agents, the protocol works for any codebase, not just Arcflow.

## The license

The OISL covers three things:

Artifact rights. Use the binaries in commercial products. Embed them. Ship them. Don't reverse-engineer them or redistribute them as standalone libraries.

Intent contributions. You own the idea. OZ owns the implementation. You get changelog credit and early access when your intent gets promoted.

Relay service. Authenticated via API key and rate-limited by tier (the free community tier gets 3 requests per day), with sandboxed builds that time out after 30 minutes.
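The community-tier limit is simple enough to sketch. Below is a hypothetical per-key daily quota using a fixed window keyed by UTC calendar day. The post doesn't say how OZ counts days or stores usage, so both choices are assumptions. A real relay would persist counts rather than hold them in memory.

```typescript
// Community tier from the relay service terms above: 3 requests per day.
const COMMUNITY_DAILY_LIMIT = 3;

// Hypothetical in-memory quota: one fixed window per UTC calendar day.
class DailyQuota {
  private readonly limit: number;
  private readonly usage = new Map<string, { day: string; used: number }>();

  constructor(limit: number) {
    this.limit = limit;
  }

  // Records the request and returns true if the key is still under its limit today.
  tryConsume(apiKey: string, now: Date = new Date()): boolean {
    const day = now.toISOString().slice(0, 10); // "YYYY-MM-DD" in UTC
    const entry = this.usage.get(apiKey);
    const used = entry !== undefined && entry.day === day ? entry.used : 0;
    if (used >= this.limit) return false;
    this.usage.set(apiKey, { day, used: used + 1 });
    return true;
  }
}

const quota = new DailyQuota(COMMUNITY_DAILY_LIMIT);
const morning = new Date("2025-03-01T09:00:00Z");
const attempts = [1, 2, 3, 4].map(() => quota.tryConsume("community-key", morning));
console.log(attempts);
```

A fixed daily window is the simplest reading of "3 requests/day". A rolling 24-hour window would be stricter at day boundaries, at the cost of storing timestamps per request.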

The full term sheet is public: INTENT-SOURCE-TERM-SHEET.md.

The SDK wrapper code remains MIT. Build whatever you want with it.

## What we're shipping

Three repos, three concerns:

ozinc/arcflow is the database itself. SDK, docs, MCP server, examples. This is what developers install with npm install arcflow and what their agents interact with daily.

ozinc/oz-relay is the A2A build relay server. Apache-2.0 licensed. It receives intents, spins up sandboxed build sessions, compiles artifacts, and sends them back. Two Rust crates: oz-relay-common for shared A2A types and intent schemas, oz-relay-server for the Axum-based server with SSE streaming and Ed25519 artifact signing.

ozinc/arcflow/legal/INTENT-SOURCE-TERM-SHEET.md is the license term sheet. Public, readable, covers artifact rights, intent contributions, and relay service tiers.

You can validate intents locally right now:

```shell
arcflow-relay submit \
  --intent "Add OPTIONAL MATCH support" \
  --motivation "Need optional relationship queries" \
  --test "OPTIONAL MATCH (a)-[:KNOWS]->(b) RETURN a.name, b.name" \
  --expect "Returns null when no KNOWS edge" \
  --category feature
```

When the relay goes live, that command will send an intent to OZ's build server and you'll get back a signed binary. The sandboxed builds, artifact signing, and promotion queue are next.
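Local validation can be as simple as turning the flags into the intent JSON and checking it before anything leaves the machine. The sketch below is hypothetical and is not `arcflow-relay`'s actual code. Three things are assumptions: the required-field rules, the positional pairing of each `--test` with its `--expect`, and passing the Arcflow version in separately (the command above has no version flag).

```typescript
interface TestCase {
  query: string;
  expected_behavior: string;
}

interface Intent {
  description: string;
  motivation: string;
  category: string;
  test_cases: TestCase[];
  context: { arcflow_version: string };
}

// Values as they would arrive from the command-line flags.
interface SubmitFlags {
  intent: string;
  motivation: string;
  category: string;
  tests: string[]; // each --test
  expects: string[]; // each --expect, paired by position (assumption)
}

function intentFromFlags(flags: SubmitFlags, arcflowVersion: string): Intent {
  return {
    description: flags.intent,
    motivation: flags.motivation,
    category: flags.category,
    test_cases: flags.tests.map((query, i) => ({ query, expected_behavior: flags.expects[i] ?? "" })),
    context: { arcflow_version: arcflowVersion },
  };
}

// Returns every problem found; an empty array means the intent is ready to submit.
function validateIntent(intent: Intent): string[] {
  const errors: string[] = [];
  if (intent.description.trim() === "") errors.push("description is required");
  if (intent.motivation.trim() === "") errors.push("motivation is required");
  if (intent.category.trim() === "") errors.push("category is required");
  if (intent.test_cases.length === 0) errors.push("at least one test case is required");
  intent.test_cases.forEach((tc, i) => {
    if (tc.query.trim() === "") errors.push(`test_cases[${i}].query is empty`);
    if (tc.expected_behavior.trim() === "") errors.push(`test_cases[${i}].expected_behavior is empty`);
  });
  if (!/^\d+\.\d+\.\d+$/.test(intent.context.arcflow_version)) {
    errors.push("context.arcflow_version must look like MAJOR.MINOR.PATCH");
  }
  return errors;
}

// The flag values from the command above.
const flags: SubmitFlags = {
  intent: "Add OPTIONAL MATCH support",
  motivation: "Need optional relationship queries",
  category: "feature",
  tests: ["OPTIONAL MATCH (a)-[:KNOWS]->(b) RETURN a.name, b.name"],
  expects: ["Returns null when no KNOWS edge"],
};

console.log(validateIntent(intentFromFlags(flags, "1.7.0")));
```

Collecting every error at once, rather than stopping at the first, lets the developer's agent repair the whole intent in one pass.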

## Why now

Two things converged. AI coding agents got good enough to implement non-trivial changes from behavioral descriptions. And the A2A protocol gave us a standard way for agents to talk to each other across trust boundaries.

A year ago, the server-side agent would have fumbled anything beyond a one-line fix. Today, a coding agent running against a large Rust codebase can implement a new query operator, write tests, and compile a release build. The agent capability crossed the threshold where this architecture works.

The people who push spatial intelligence forward shouldn't have to become infrastructure engineers to do it. Now they don't have to.